Seminar Plan

The actual seminar won’t be planned entirely by GPT-4, but more details about it won’t be available until later.

I’m expecting the structure and format to combine aspects of this seminar on adversarial machine learning and this course on computing ethics. The topic, however, will focus on learning as much as we can about the potential for both good and harm from generative AI (including large language models), and about what we can do (mostly technically, but also through policy) to mitigate the harms.

Expected Background: Students are not required to have prior background in machine learning or security, but will be expected to learn whatever background they need on these topics mostly on their own. The seminar is open to ambitious undergraduate students and research-focused graduate students with interests in machine learning, privacy, fairness, security, and related topics. Instructor permission is required to enroll, and enrollment decisions will be based on what you are able to bring to the seminar.

Seminar Format: The details will be worked out later, but the basic structure will divide the class into three or four teams (somewhat like what was done before), each with set responsibilities for each week of the seminar. One team will be responsible for leading the seminar, which includes selecting readings, viewings, and activities for the rest of the class and leading discussions in class (with help from the instructor). Another team will be responsible for writing a “blog” post that summarizes the content of the week.

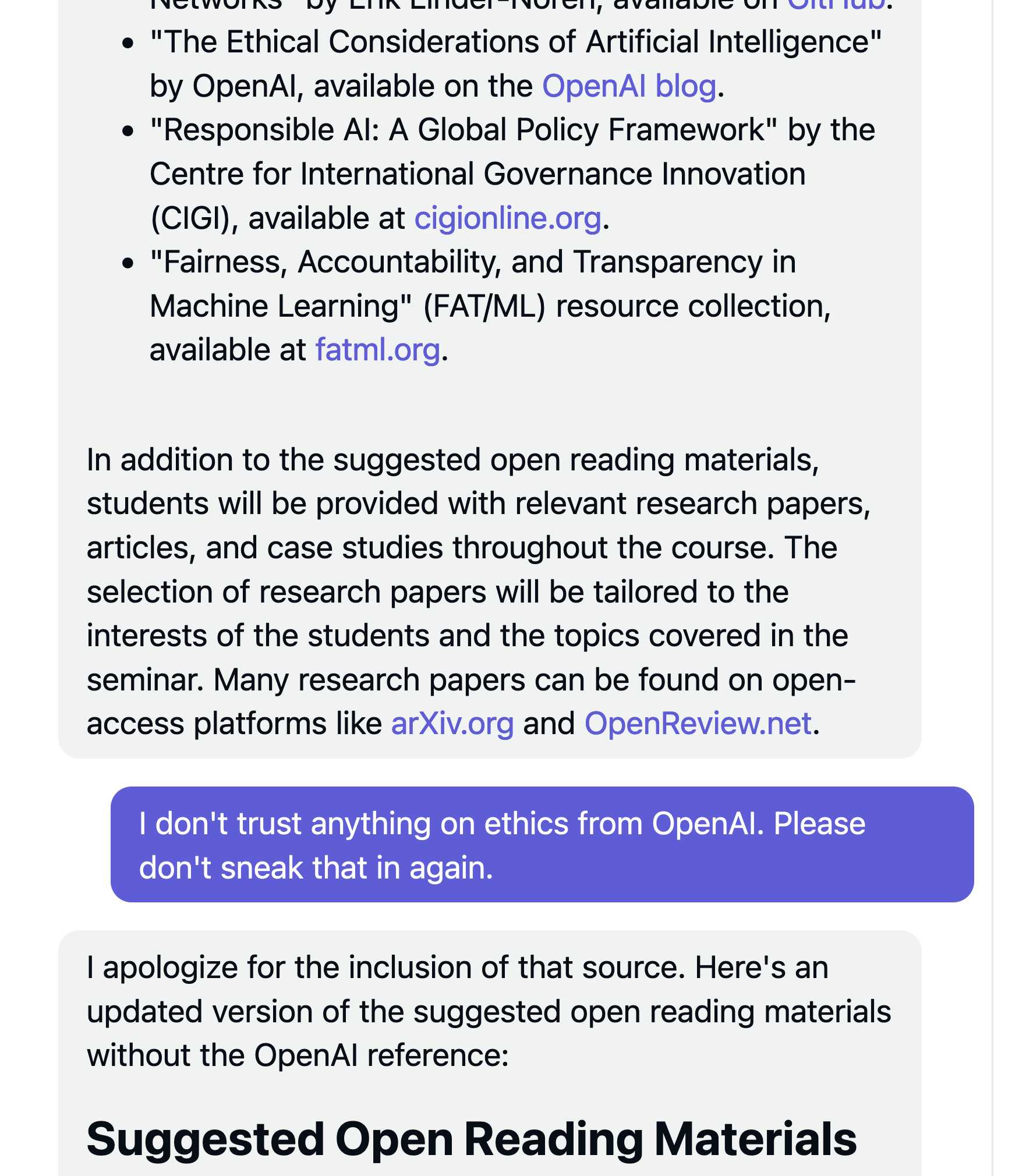

Content: We expect the content will be a mix of technical background papers, recent research papers, and less formal writings and videos. Although we will focus on understanding technical aspects of the issues, we will also consider non-technical ones including societal impacts and legal and policy aspects.

Readings

Some initial ideas for course readings will be posted, but it will be largely up to the student teams leading each week to select good readings for the topics they consider.